The U.S. National Science Foundation has invested in fundamental robotic research for decades, continuously pushing the boundaries of exploration, innovation and productivity.

Whether fully autonomous or in close collaboration with humans, robots are becoming more prevalent throughout people's lives, from the factory floor to the operating room to space exploration.

This page lists funding opportunities that may be of interest to researchers in robotics and robotics-related disciplines.

Foundational Research in Robotics

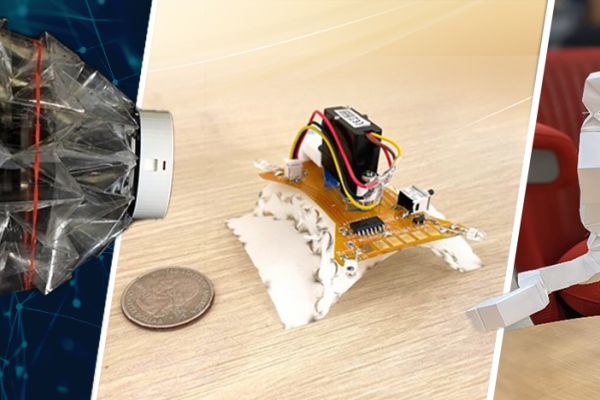

NSF's Foundational Research in Robotics (FRR) program supports research on robotic systems that exhibit significant levels of both computational capability and physical complexity.

Robotics is a deeply interdisciplinary field, combining advances in engineering with innovations in computer science. For the purposes of this program, a robot is defined as intelligence embodied in an engineered construct, with the ability to process information, sense, plan and move within or substantially alter its working environment.

Robotics-related programs

Supports startups and small businesses in translating research into products and services, including robotics, with the potential to transform their industries and make a positive impact on society.

Learn more about America's Seed Fund and robotics.

Supports research on engineered systems with a seamless integration of cyber and physical components, such as computation, control, networking, learning, autonomy, security, privacy and verification, for a range of application domains.

Learn more about Cyber-Physical Systems.

Supports fundamental engineering research that improves the quality of life of persons with disabilities. Projects advance knowledge regarding a specific disability, pathological motion or injury mechanism.

Learn more about Disability and Rehabilitation Engineering.

Supports fundamental research on the modeling, analysis, diagnostics, measurement and control of dynamical systems, including but not limited to those involving physical interaction between humans and embodied artificial intelligence.

Learn more about Dynamics, Control and Cognition.

Supports research on electric power systems, power electronics and drives, battery management systems, and hybrid and electric vehicles, as well as on understanding the interplay of power systems with associated regulatory and economic structures and with consumer behavior.

Learn more about Energy, Power, Control, and Networks.

Supports interdisciplinary research in human-computer interaction to design technologies that amplify human capabilities and to study how human, technical and contextual aspects of computing and communication systems shape their benefits, effects and risks.

Learn more about Human-Centered Computing.

Supports social scientific studies of law and law-like systems of rules, of the connections between law and human behavior, and of how science and technology are applied in legal contexts.

Learn more about Law and Science.

Supports fundamental research on the behavior of deformable solid materials and structures under internal and external actions.

Learn more about Mechanics of Materials and Structures.

Supports theoretically motivated research aimed at increasing understanding of human perception, action and cognition and their interactions.

Learn more about Perception, Action & Cognition.

Supports computational research in artificial intelligence, machine learning, computer vision, human language technologies and computational neuroscience.

Learn more about Robust Intelligence.

Supports historical, philosophical and social scientific studies of the intellectual, material and social aspects of STEM, including ethics, equity, governance and policy issues relating to scientific theory and practice.

Learn more about Science and Technology Studies.

Supports research to develop fundamental knowledge about principles, processes and mechanisms of learning and about augmented intelligence — how human cognitive function can be augmented through interactions with others and technology.

Learn more about Science of Learning and Augmented Intelligence.

Supports the development of new methods that intuitively and intelligently collect, sense, connect, analyze and interpret data from individuals, devices and systems.

Learn more about Smart Health and Biomedical Research in the Era of Artificial Intelligence.

Education and learning programs

Supports research on the design, development and impact of STEM learning opportunities and experiences, including those involving robotics, for the public in informal educational environments.

Learn more about Advancing Informal STEM Learning.

Supports research and development, including projects involving robotics, to enhance STEM learning and teaching for preK-12 students.

Learn more about Discovery Research PreK-12.

Supports early-stage research in emerging technologies such as AI, robotics and immersive or augmenting technologies for teaching and learning that respond to pressing needs in real-world educational environments.

Learn more about Research on Innovative Technologies for Enhanced Learning.

Partnerships

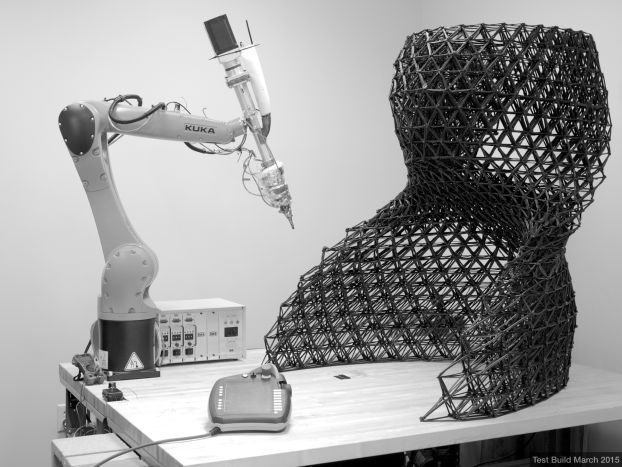

Accelerates the impact of basic research in various focus areas, including robotics and advanced manufacturing, through close relationships between industry, academic teams and government.

Learn more about Industry-University Cooperative Research Centers.

An immersive, entrepreneurial training program that connects the technological, entrepreneurial and business communities to accelerate discoveries from the lab to the marketplace.

Learn more about Innovation Corps (I-Corps™).

Additional resources

Subscribe to NSF's robotics listserv

Email listserv@listserv.nsf.gov with the message "subscribe robotics [your name]" to be notified of the latest NSF robotics news, funding opportunities and events. Please leave the subject line blank and remove any signatures, attachments or images.