We advance computing in areas of national priority, from artificial intelligence to quantum computing and from cybersecurity to next-generation communications, while ensuring these technologies are safe and trustworthy and that they improve our world.

Whether it's developing new research ideas in computer and information science and engineering, building community infrastructure so people can do their research more effectively, or preparing the next-generation workforce, NSF helps the U.S. remain at the forefront of science and technology in a way that supports all of society.

What we support

Advanced cyberinfrastructure

We support the development and operation of state-of-the-art cyberinfrastructure resources, tools and services essential to the advancement and transformation of science and engineering.

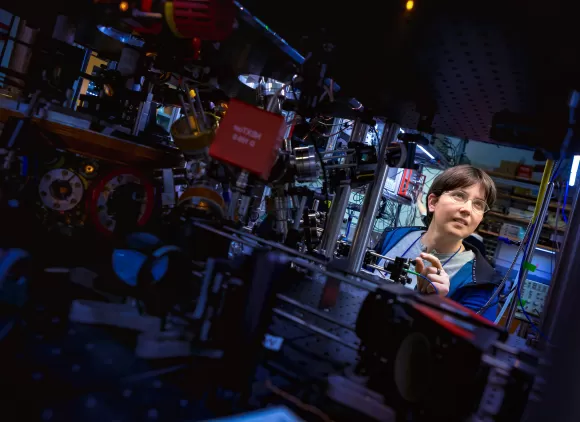

Computing and communication foundations

We support foundational research in computing, communication, hardware, software and emerging technologies such as quantum information science.

Visit the Division of Computing and Communication Foundations

Visit the Division of Electrical, Communications and Cyber Systems

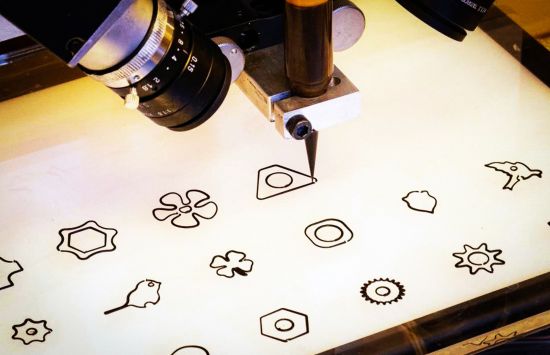

Computer and network systems

We support new computing and networking technologies, as well as new ways to use current technologies, while ensuring their security and privacy.

Visit the Division of Computer and Network Systems

Visit the Division of Electrical, Communications and Cyber Systems

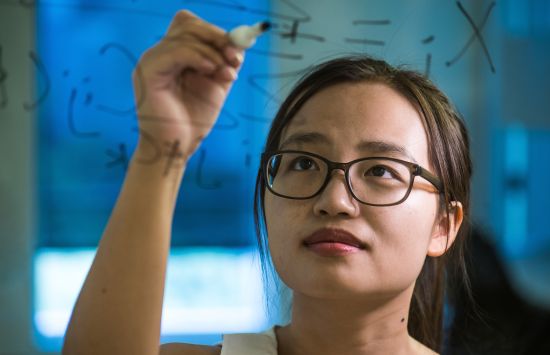

Information and intelligent systems

We support research on the interrelated roles of people, computers and information to advance knowledge of artificial intelligence, data management, assistive technologies and human-centered computing.

Educational resources

View lesson plans, activities and multimedia for K–12 audiences that focus on computer and information science and engineering.

View the resources